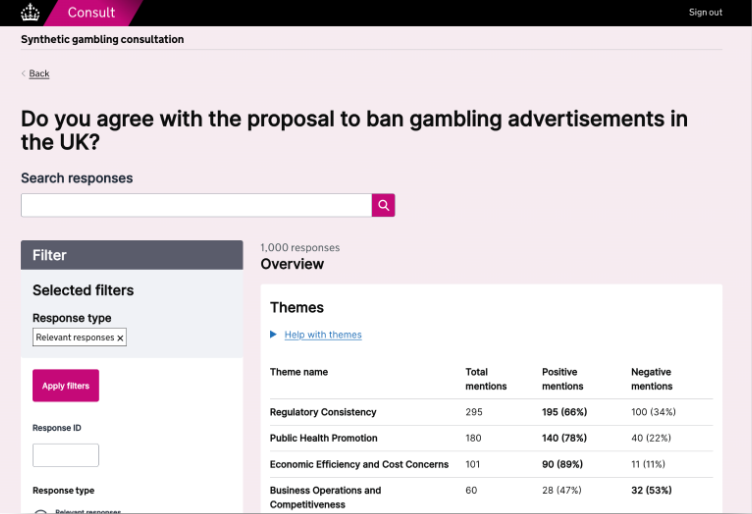

As first reported by The Guardian, the UK has begun rolling out a suite of AI tools known as “Humphrey” across the public sector, aiming to boost civil service efficiency. These tools are powered by large language models from OpenAI, Anthropic, and Google.

All civil servants in England and Wales are set to receive training on using these tools. The government is adopting a pay-as-you-go model via existing cloud service contracts, which gives it flexibility in choosing among the big tech providers. However, this model has prompted concerns about the growing influence of private tech firms on public policy and administration.

Source: ai.gov.uk.

The rollout coincides with escalating debates about the use of copyrighted materials to train AI models. The government’s data bill, which allows copyrighted content to be used unless creators opt out, recently passed its final stage in the House of Lords despite strong opposition from figures like Elton John, Paul McCartney, and Kate Bush. Critics argue that the same AI models built on uncompensated creative work are now being deeply embedded into government operations, potentially creating a regulatory conflict of interest. Ed Newton-Rex, CEO of Fairly Trained, who obtained information on Humphrey through a freedom of information request, warned that it is inappropriate for the government to rely so heavily on companies it may also need to regulate.

Another layer of concern is the reliability of AI outputs. Newton-Rex emphasized that AI tools are known for producing false information (so-called “hallucinations”) and suggested that the government should keep transparent records of errors made by AI systems so that their performance can be evaluated on an ongoing basis. Shami Chakrabarti, a Labour peer and civil liberties advocate, also highlighted the risks of automated bias and pointed to past public sector IT failures, such as the Horizon scandal, as cautionary examples.

Government departments have defended their AI strategy, noting that tools like AI Minute and Redbox significantly cut down administrative tasks at minimal cost. A spokesperson for the Department for Science, Innovation and Technology stated that using AI does not impair the government’s regulatory role, drawing a parallel to how the NHS both buys and regulates pharmaceuticals. Officials also point to a published AI playbook and ongoing internal evaluations as safeguards, while maintaining that the use of these tools is being shaped by in-house experts to keep costs low and maintain control.

The UK government’s Humphrey AI initiative promises administrative efficiency, but it also raises critical questions about public accountability, copyright ethics, and the expanding footprint of big tech in public governance. As AI continues to be embedded in state functions, a balance must be struck between innovation and oversight to ensure that the public interest remains protected.